Abstract

Modern foundational Multimodal Large Language Models (MLLMs) and video world models have advanced significantly in mathematical, common-sense, and visual reasoning, but their grasp of the underlying physics remains underexplored. Existing benchmarks that attempt to measure this either rely on synthetic Visual Question Answering (VQA) templates or focus on perceptual video quality, which is tangential to how well a video obeys physical laws.

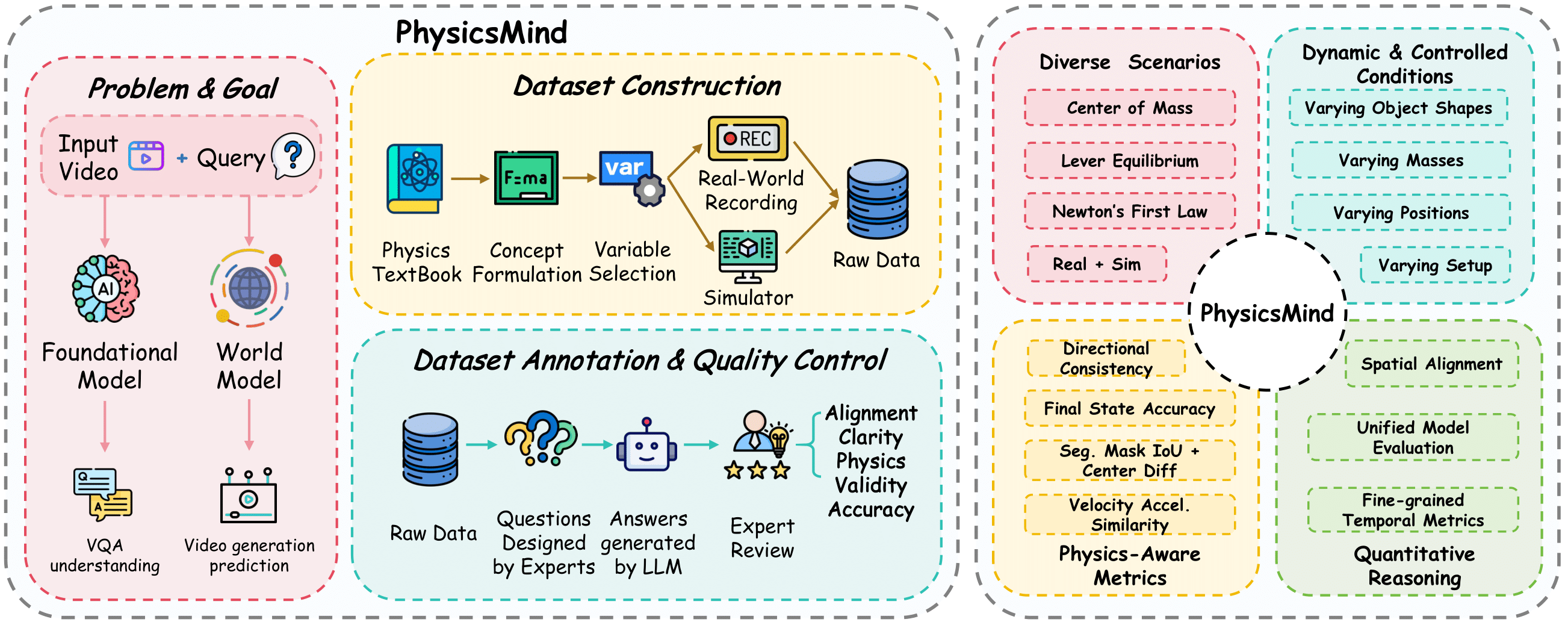

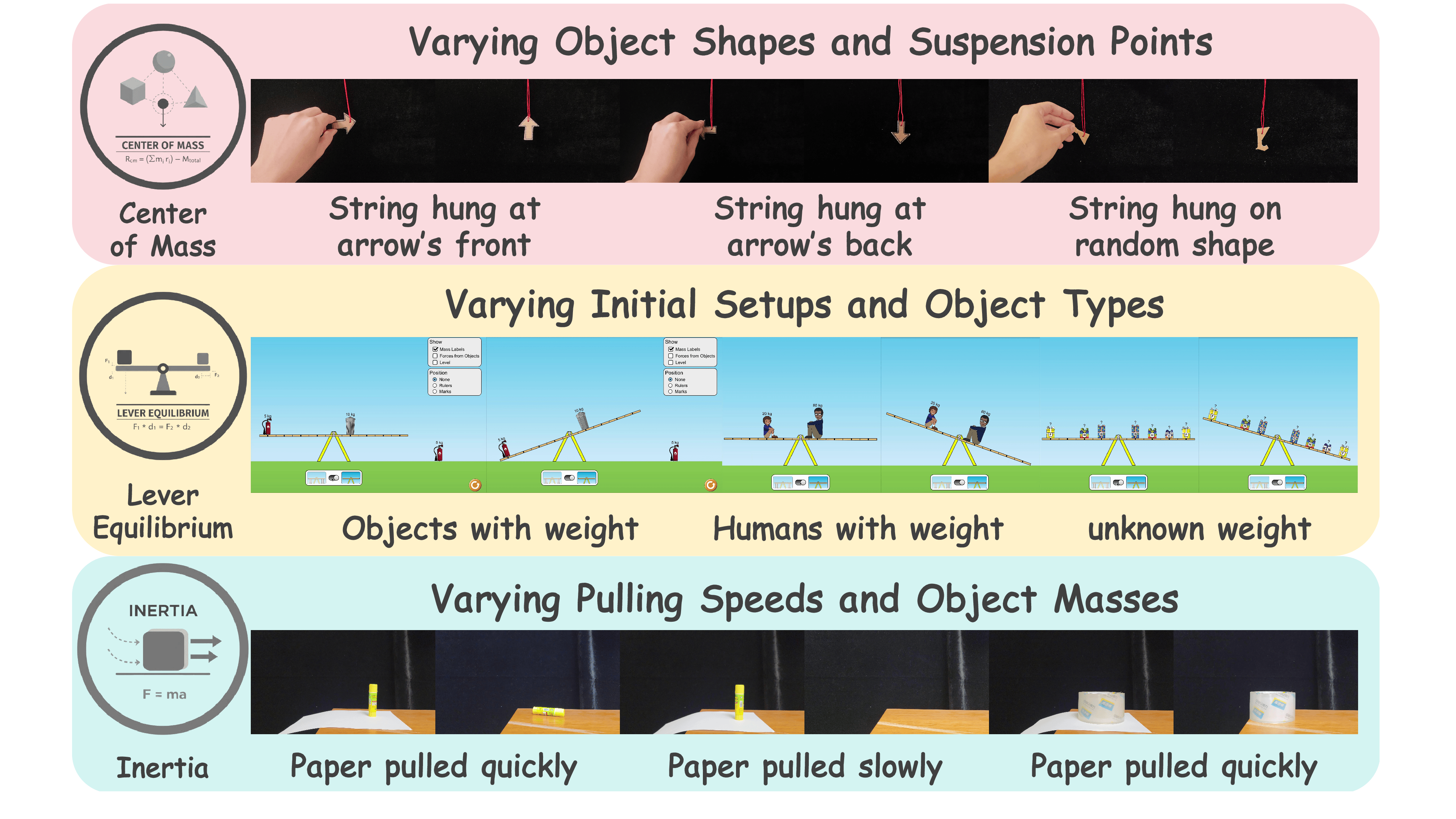

To address this fragmentation, we introduce PhysicsMind, a unified benchmark spanning both real and simulated environments that evaluates physical reasoning and physically plausible generation under three canonical physical principles: center of mass, lever equilibrium, and Newton's first law.

PhysicsMind comprises two main tasks:

- VQA tasks: Testing whether models can reason about and determine physical quantities and values from images or short videos.

- Video Generation (VG) tasks: Evaluating whether predicted motion trajectories obey the same center-of-mass, torque, and inertial constraints as the ground truth.
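The three constraints can be stated as simple checks. The Python sketch below is illustrative only: the function names (`center_of_mass`, `is_balanced`, `obeys_first_law`), the 2D point representation, and the tolerance-based comparisons are hypothetical and are not PhysicsMind's annotation or scoring code.

```python
import math

# Hypothetical helpers illustrating the three constraints; not PhysicsMind's own code.


def center_of_mass(masses, positions):
    """Mass-weighted mean position: r_cm = sum(m_i * r_i) / sum(m_i)."""
    total = sum(masses)
    x = sum(m * p[0] for m, p in zip(masses, positions)) / total
    y = sum(m * p[1] for m, p in zip(masses, positions)) / total
    return (x, y)


def is_balanced(loads, tol=1e-6):
    """Lever equilibrium: net torque about the fulcrum is (approximately) zero.

    Each load is (force, signed_arm), with arms negative on one side of the
    fulcrum and positive on the other, so opposing torques cancel in the sum.
    """
    net_torque = sum(force * arm for force, arm in loads)
    return abs(net_torque) <= tol


def obeys_first_law(positions, dt, tol=1e-6):
    """Newton's first law (no net force): frame-to-frame velocity stays constant."""
    if len(positions) < 2:
        raise ValueError("need at least two positions to estimate velocity")
    velocities = [
        ((b[0] - a[0]) / dt, (b[1] - a[1]) / dt)
        for a, b in zip(positions, positions[1:])
    ]
    vx0, vy0 = velocities[0]
    return all(math.hypot(vx - vx0, vy - vy0) <= tol for vx, vy in velocities)
```

For example, a 2 N load 3 m on one side of the fulcrum balances a 3 N load 2 m on the other, since 2 × 3 = 3 × 2, so `is_balanced([(2.0, -3.0), (3.0, 2.0)])` holds.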

A broad range of recent models is evaluated on PhysicsMind and found to rely on appearance heuristics while often violating basic mechanics. These gaps indicate that current scaling and training are still insufficient for robust physical understanding.

Framework & Analysis

Figure 1. The PhysicsMind framework combines foundational models with physics-guided dataset construction, expert-verified annotations, and diverse controlled scenarios.

Figure 2. Three canonical mechanics scenarios: Center of Mass, Lever Equilibrium, and Newton's First Law, tested in Real and Sim environments.

Video Generation Analysis

Qualitative comparisons of Video Generation models against Ground Truth.

Comparing temporal stability and physical realism.

Evaluating temporal consistency and contact.

Checking whether the final state satisfies torque balance.

Experimental Results

1. VQA Physics Evaluation

| Model | CoM Position | CoM Rotation | CoM Overall | LE Equilibrium | LE Bal. Adj. | LE Overall | NI Obj. Pos. | NI Stability | NI Overall |
|---|---|---|---|---|---|---|---|---|---|
| GPT-5 [30] | 60.00 | 80.00 | 70.00 | 66.67 | 76.19 | 70.00 | 60.00 | 95.00 | 77.50 |
| o4-mini [29] | 50.00 | 55.00 | 52.50 | 28.57 | 85.71 | 52.50 | 75.00 | 95.00 | 85.00 |
| GPT-4o [27] | 40.00 | 35.00 | 37.50 | 42.86 | 54.76 | 47.50 | 45.00 | 85.00 | 65.00 |
| Claude 4.5 Sonnet [3] | 45.00 | 20.00 | 32.50 | 61.90 | 61.90 | 59.52 | 40.00 | 90.00 | 65.00 |
| Gemini 2.5 Pro [9] | 30.00 | 70.00 | 50.00 | 61.90 | 52.38 | 57.14 | 20.00 | 45.00 | 32.50 |
| Qwen2.5-VL-72B [36] | 30.00 | 55.00 | 42.50 | 54.76 | 52.38 | 52.38 | 42.86 | 85.00 | 62.50 |

CoM = Center of Mass; LE = Lever Equilibrium; NI = Newton's First Law.

Table 1. Selected VQA Physics Evaluation Results. Values represent accuracy in percentage (%).
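The page does not state how the Overall columns are aggregated. One natural reading, assumed here, is accuracy over all of a scenario's questions pooled together, which equals the question-count-weighted mean of the subtask accuracies and reduces to their plain mean when the subtasks have the same number of questions. The sketch below uses hypothetical helper names and hypothetical question counts to contrast the two aggregations.

```python
def accuracy(correct, total):
    """Accuracy in percent, as reported in Table 1."""
    return 100.0 * correct / total


def pooled_accuracy(subtasks):
    """Accuracy over all questions pooled across subtasks.

    `subtasks` is a list of (num_correct, num_questions) pairs. The result equals
    the question-count-weighted mean of the per-subtask accuracies.
    """
    correct = sum(c for c, _ in subtasks)
    total = sum(t for _, t in subtasks)
    return accuracy(correct, total)


def mean_accuracy(subtasks):
    """Unweighted mean of per-subtask accuracies."""
    accs = [accuracy(c, t) for c, t in subtasks]
    return sum(accs) / len(accs)


# Hypothetical counts: 12/20 and 16/20 correct give 60.00 and 80.00, the same
# values as GPT-5's CoM Position and Rotation; both aggregations give 70.0 here.
equal_sizes = [(12, 20), (16, 20)]
print(pooled_accuracy(equal_sizes), mean_accuracy(equal_sizes))

# With unequal subtask sizes the two aggregations diverge.
unequal_sizes = [(9, 10), (1, 30)]
print(pooled_accuracy(unequal_sizes), mean_accuracy(unequal_sizes))
```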

2. Video Generation Physics Evaluation

| Model | Center of Mass: Mask IoU ↑ | Center of Mass: Center Diff ↓ | Lever Eq.: Final Acc (%) ↑ | Newton's First Law: Traj. RMSE ↓ | Newton's First Law: Dir. Consistency ↑ |
|---|---|---|---|---|---|
| Veo3.1 [13] | 0.019 | 108.39 | 35.0 | 1.384 | 0.5419 |
| Sora-2 [31] | 0.167 | 121.42 | 40.0 | 0.380 | 0.5494 |
| LTX-Video [14] | 0.005 | 76.37 | 4.76 | 0.406 | 0.5594 |
| CogVideoX1.5 [42] | 0.014 | 223.70 | 38.1 | 0.414 | 0.4884 |
| Pyramid Flow [17] | 0.012 | 322.97 | 47.6 | 0.381 | 0.6437 |
| Cosmos-predict2 [24] | 0.009 | 217.33 | 42.9 | 0.350 | 0.4884 |

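Table 2. Video generation physics evaluation. Metrics are Segmentation Mask IoU (higher is better) and Center Difference (lower is better) for Center of Mass, Final Accuracy (%, higher is better) for Lever Equilibrium, and Trajectory RMSE (lower is better) and Direction Consistency (higher is better) for Newton's First Law.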
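Table 2 names its metrics, but the page does not define them. The Python sketch below shows common definitions, all of which are assumptions: masks are sets of foreground pixel coordinates, Center Diff is the Euclidean distance between mask centroids, Traj. RMSE is computed over time-aligned 2D positions, and Direction Consistency is taken as the mean cosine similarity between per-step displacement vectors. PhysicsMind's exact formulations (normalization, frame alignment, coordinate units) may differ.

```python
import math


def mask_iou(pred, gt):
    """IoU of two binary masks given as sets of (row, col) foreground pixels."""
    union = pred | gt
    if not union:
        return 1.0  # both masks empty: treat as perfect agreement
    return len(pred & gt) / len(union)


def centroid(mask):
    """Mean (row, col) of a non-empty mask."""
    if not mask:
        raise ValueError("centroid of an empty mask is undefined")
    n = len(mask)
    return (sum(r for r, _ in mask) / n, sum(c for _, c in mask) / n)


def center_diff(pred, gt):
    """Euclidean distance (in pixels) between the two mask centroids."""
    (r1, c1), (r2, c2) = centroid(pred), centroid(gt)
    return math.hypot(r1 - r2, c1 - c2)


def traj_rmse(pred, gt):
    """Root-mean-square Euclidean error between time-aligned 2D trajectories."""
    sq = [(px - gx) ** 2 + (py - gy) ** 2 for (px, py), (gx, gy) in zip(pred, gt)]
    return math.sqrt(sum(sq) / len(sq))


def direction_consistency(pred, gt):
    """Mean cosine similarity between per-step displacement vectors.

    Steps where either trajectory does not move are skipped.
    """
    cosines = []
    for (p0, p1), (g0, g1) in zip(zip(pred, pred[1:]), zip(gt, gt[1:])):
        dp = (p1[0] - p0[0], p1[1] - p0[1])
        dg = (g1[0] - g0[0], g1[1] - g0[1])
        norm = math.hypot(*dp) * math.hypot(*dg)
        if norm > 0:
            cosines.append((dp[0] * dg[0] + dp[1] * dg[1]) / norm)
    return sum(cosines) / len(cosines) if cosines else 0.0
```

Under these definitions a score of 1.0 for Direction Consistency means every generated step moves in the same direction as the ground truth, and -1.0 means every step moves in the opposite direction.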

BibTeX

@article{PhysicsMind2026,
  title={PhysicsMind: Sim and Real Mechanics Benchmarking for Physical Reasoning and Prediction in Foundational VLMs and World Models},
  author={Mak, Chak-Wing and Zhu, Guanyu and Zhang, Boyi and Li, Hongji and Chi, Xiaowei and Zhang, Kevin and Wu, Yichen and He, Yangfan and Fan, Chun-Kai and Lu, Wentao and Ge, Kuangzhi and Fang, Xinyu and He, Hongyang and Lu, Kuan and Xu, Tianxiang and Zhang, Li and Ni, Yongxin and Li, Youhua and Zhang, Shanghang},
  journal={Under Review},
  year={2026}
}